It’s been a while. I had given up on the concept of blogs; an expression that is so informal, and at times irresponsible, was hard to fit in my head. That war-and-peace conversation with @Ashok is perhaps another article, but I’ve made peace with that conflict and concluded that some form of expression is better than none to begin with. In this article I will try to express things around authorization and the principles we used in one of the solutions we built a while ago (we are currently working on open-sourcing it, watch out for Ringfort). It also includes a general narrative on where authorization should lie in the overall architecture, whether it’s a solved problem, and so on. A few of us @thoughtworks were fortunate enough to build a collaboration and knowledge management platform, from scratch, for one of our clients. Authorization was one of the key ingredients of that journey. Hopefully a lot of this narrative makes sense; I would be happy to be challenged otherwise.

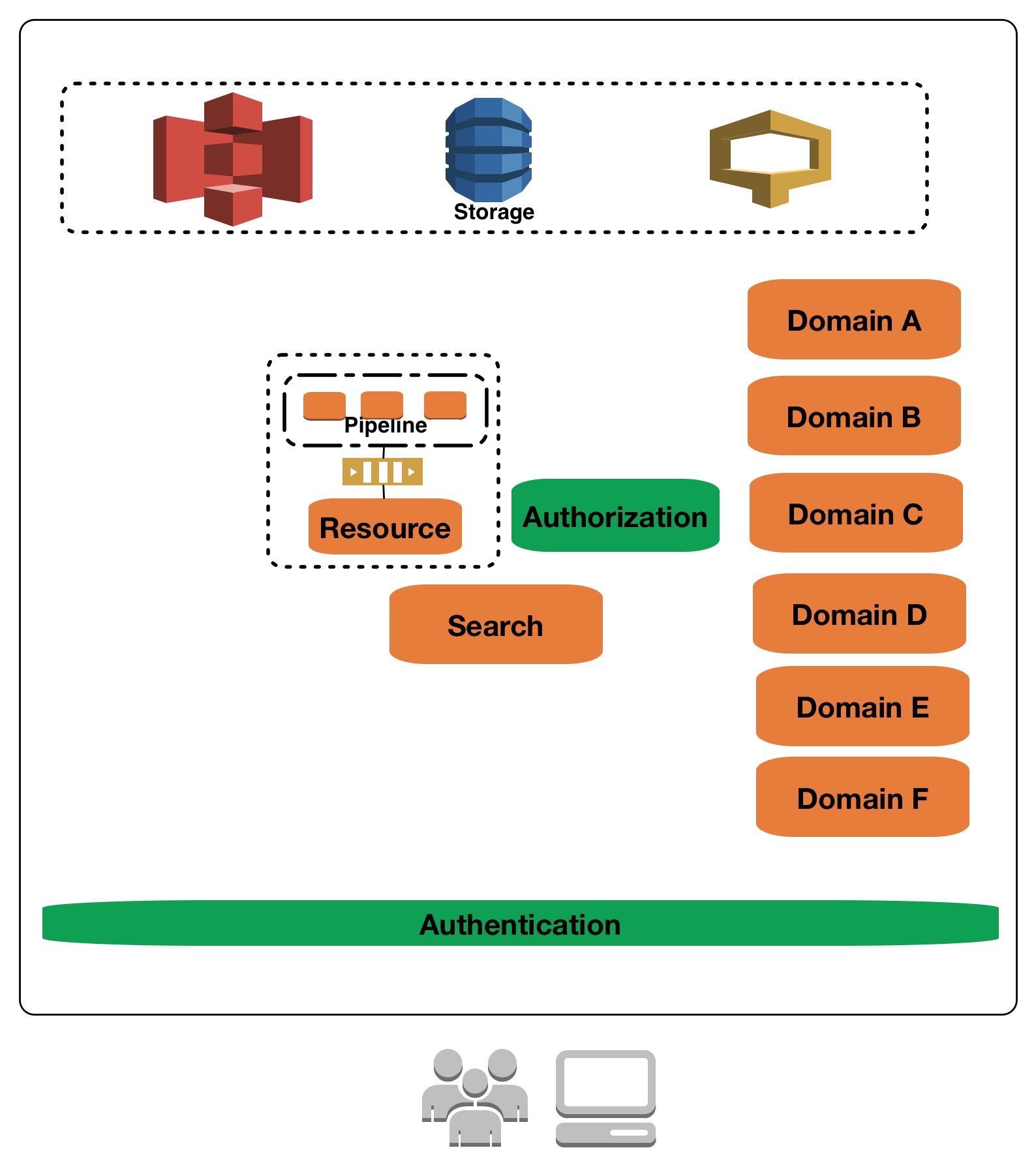

Caveat: none of these diagrams should be interpreted as AWS architecture. I like the stencil because its icons make it easy to express everything essential.

The confusion, nomenclature - Authentication vs Authorization

Authentication (authn), put simply, is establishing whether a user is valid. Once a user is authenticated, what that user can or cannot do is the concern addressed by authorization (authz). From a definition standpoint, things seem fairly simple. Authentication is typically an edge concern and authorization is a concern close to the domain. You then have to think about how this works when search presents a unified view (active and passive authz), when there is a unified domain around resources, and what the mechanics of all that are in an authz-ed setup. It starts getting grey when you look at the landscape of toolsets and frameworks which help you do some of that.

Note: Each box is a self-contained service with its own data, evolution etc. Integration between these domains happens at the service/API level rather than at the storage level. Although I don’t prefer “Micro-Services” as a term (perhaps another post on that), yes, in the colloquial sense they are micro-services.

Story - Part 1

The convenience vs control battle

In Dec-2013, when we were evolving the platform mentioned earlier and authn and authz were being looked at closely, the immediate response in a huddle was “these are solved problems, let’s not re-invent the wheel”. That’s only partially true; we will come back to that later. Starting with that hypothesis, a bunch of tools were considered for evaluation: Apache Shiro, OpenAM and so on. One thing that was not explicitly called out was that authn and authz are different problems. Although they are solved or provided by one tool most of the time, they are still different. But first, our journey with these tools continues.

The first tool we considered was Shiro. The internal community had seen a fair amount of success with it on a different back-office project for a retailer, and since we were building services on the JVM, it was a reasonable consideration. One thing that became evident immediately was the operational complexity of managing the service that hosted authn.

Architecture and critique!

From an architectural standpoint, we called out a bunch of things:

- User and authn were clubbed together in one service. This was fine for password-based login, but it would get complex for other forms of authn.

- Managing sessions, simply from an authn point of view, was going to become a problem of its own at scale. Although it’s a concern that needs to be handled, it wasn’t warranted at such an early stage in the evolution of the platform, and there was no other need for a session apart from managing user context.

- This was becoming a god service that every call had to pass through or call into. That wasn’t right for the fairly sizeable services setup we had back then.

Introduce Authz

One of the glaring things about Shiro’s authorization framework was the expression of “permissions” as strings, with queries matched against the “realm” using wildcards. We were building a multi-tenanted platform which had to be flexible enough to accommodate a lot of change and adapt to the best software delivery practices. Choosing LDAP et al. was out of the question: firstly, they are not flexible to change; secondly, they don’t scale the way we would want in a multi-tenanted setup with potentially hundreds of thousands of users per tenant. It had to be a queryable “realm”, and this is where things became a bit complex. Managing a realm that is friendly to string-based expression was going to become a problem either way. Also, changes to these permissions, which are dynamic, would be hard to track without an immutable store. It’s not that it couldn’t be done, but the value of control outweighed the convenience provided by the framework, given that we were not going to use the majority of the best things in it.[1]
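To make the string-based permission idea concrete, here is a minimal sketch of colon-delimited wildcard permission matching in the style Shiro uses (for example `document:read:42`). It is a simplified Python illustration rather than Shiro's API; the function name `implies` and the sample permission strings are assumptions made up for this example.

```python
def _parts(permission):
    """Split 'document:read,write:42' into [{'document'}, {'read', 'write'}, {'42'}]."""
    return [set(part.split(",")) for part in permission.split(":")]


def implies(granted, requested):
    """Return True if the granted permission string covers the requested one."""
    granted_parts = _parts(granted)
    requested_parts = _parts(requested)
    for i, granted_part in enumerate(granted_parts):
        if i >= len(requested_parts):
            # The request is less specific than the grant: every remaining
            # granted part must be a wildcard for the grant to cover it.
            if "*" not in granted_part:
                return False
        elif "*" not in granted_part and not requested_parts[i] <= granted_part:
            # This level neither is a wildcard nor contains everything requested.
            return False
    # A grant shorter than the request implies all the deeper levels.
    return True


print(implies("document:*", "document:read:42"))
print(implies("document:read", "document:write"))
```

Every grant here is an opaque string, so a question like "who can read document 42, and why" means scanning and parsing strings across the realm, which is roughly the kind of management problem described above.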

Back to square one: evaluation, part 2. OpenAM, which came with recommendations from a lot of good software people, was an immediate hit. It held the promise of expressing different forms of authn, and also of supporting a “robust” access control framework.

Principles

- Control over convenience at scale(variety and volume)

- Domain and authorization over authentication and authorization

Story - Part 2

The convenience vs control battle - continues, with added complexity

This was Q1-Q2 of 2014, when the true colours of OpenAM started to emerge! From issues with thread safety to API stability, @naughty_genius and @vignesshv have probably never spent more time thinking about anything in their illustrious careers than just getting authz in OpenAM to work.

From an authn point of view, OpenAM is probably one of the saner solutions out there, and it does what it claims to do very well. In fact, it eased some of the integration concerns we had around multi-tenancy. It is a recommended tool for authn concerns. With authz, however, we faced numerous issues. Some of the features were not fully ready back then, especially around remote policy creation and query. The XML expression added to the problem: at scale, ser-de for different queries was getting problematic. Some of the bizarre things that led to frustration included the fact that the documented API didn’t work as expected, and for a short duration we were doing unacceptable things like loading a JSP and posting data to it, or using the CLI instead (yes, you read that right, exec from a Java process!! Nightmare!), just to get things working. From a modelling point of view, a couple of things were limiting in OpenAM; modelling groups of groups/sub-groups was one such example. From a performance standpoint, even when we patched it and got the API bit working[2], there were issues handling concurrent users (a mere 50-100 concurrent users would almost kill OpenAM, which is unacceptable for a collab-esque platform). Although we did some form of RCA for all of that, the cost of fixing it and the cost of not having flexibility were very glaring, especially given the business requirements lined up for further releases.

Architecture and critique!

One of the things that made me uneasy was tokens being passed all around the suite of services, with token resolution happening for a call in some domains and not in others. For example, the user domain hosted the authz concern, so resolution was a concern imposed on that domain. Authn was also owned by the user domain, which was another reason token resolution landed there, and from a scale point of view it was becoming a bottleneck.

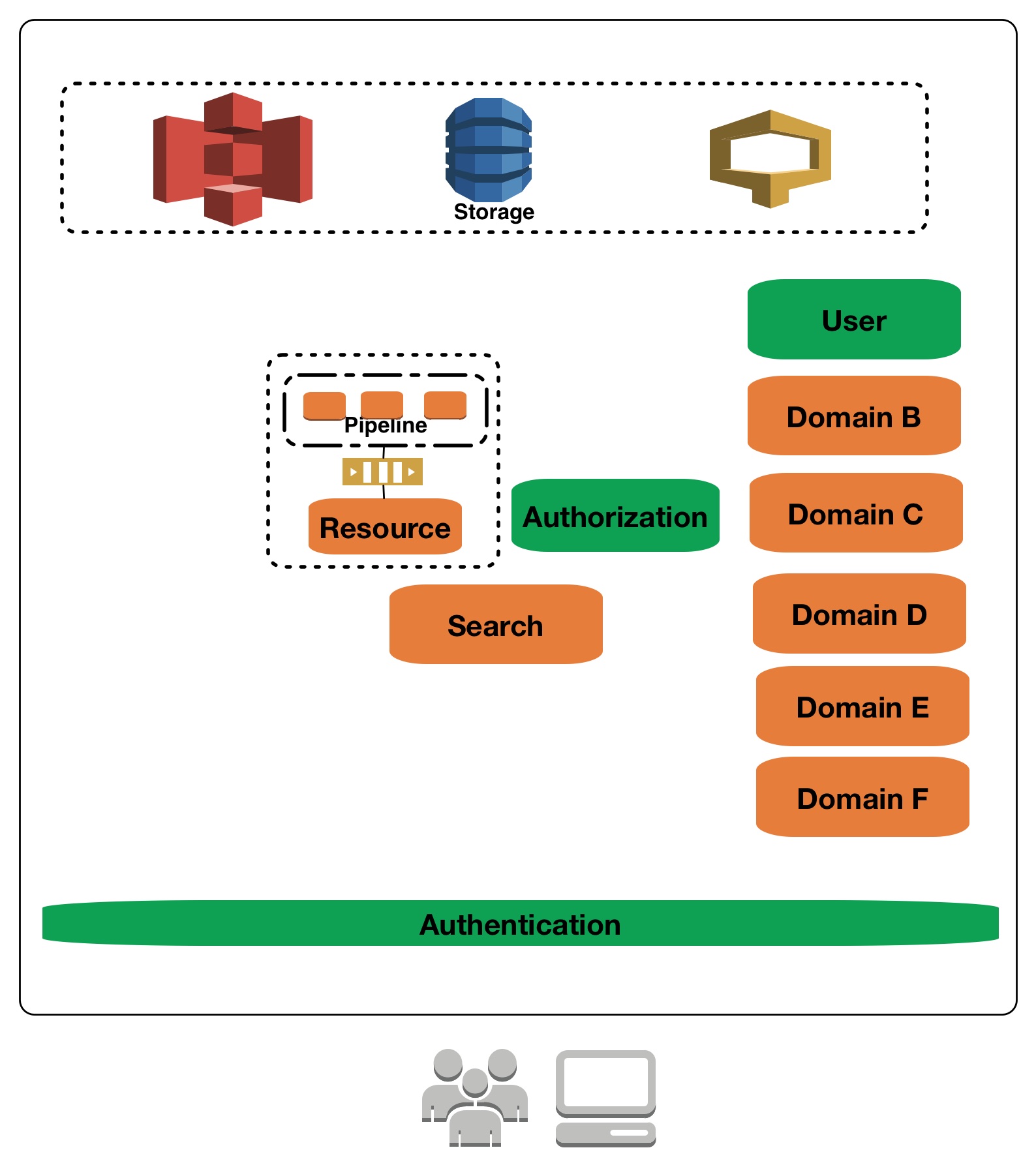

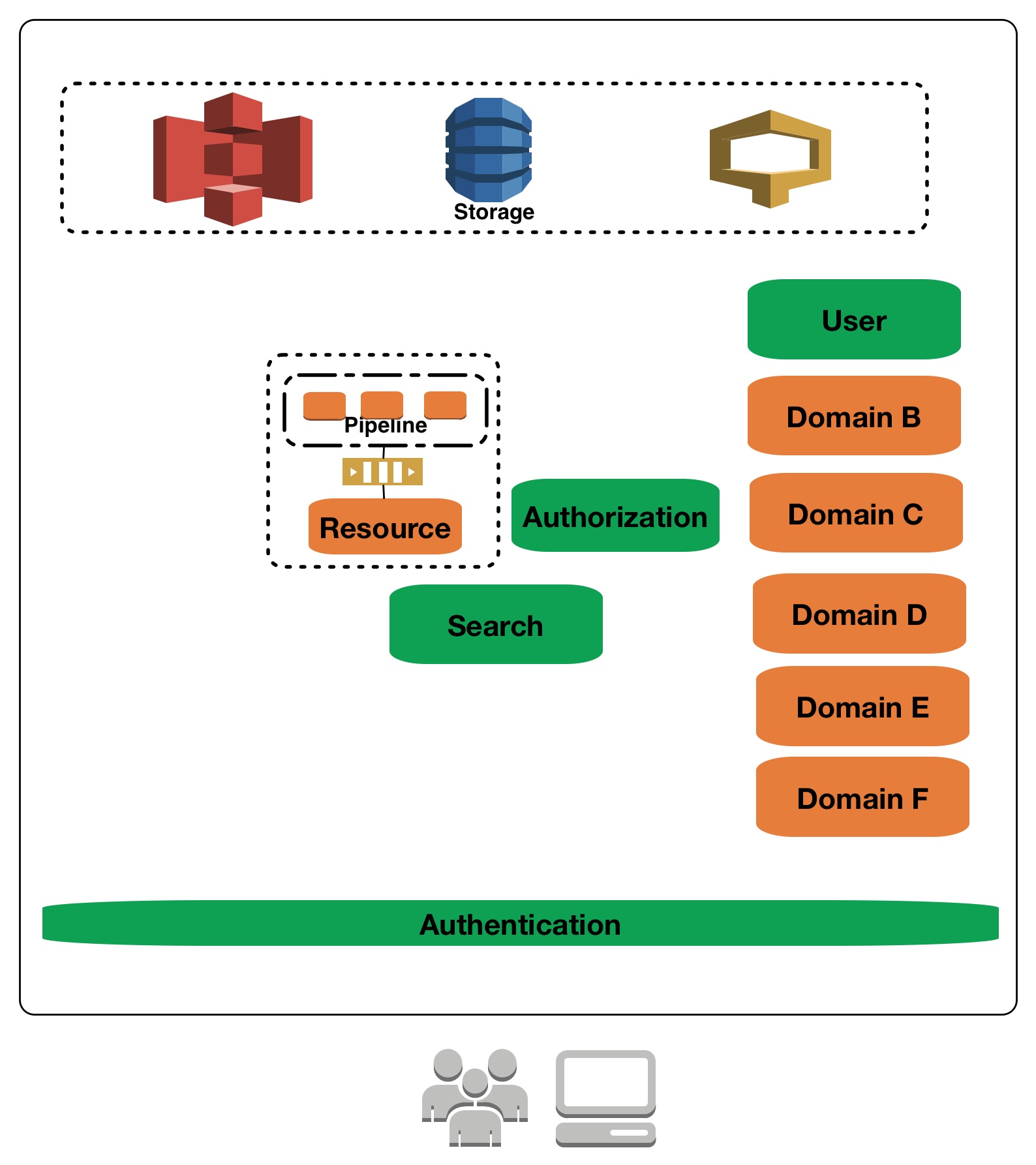

More generally, identity typically encompasses the source of truth for user attributes, authn and authz. In an architectural sense, these are different, heterogeneous concerns. Users and their attributes are very business specific and should be considered a self-evolving, business-centric domain as opposed to a peripheral concern. Authn does need to rely on certain attributes, but these must be a consequence of the domain defining what the attributes are. One of the glaring things for us was trying to change the schema of a tool that was handling authn to accommodate plenty of business attributes, just so that everything was in one place. Our recommendation would be to keep them separate, as the attributes for the authn concern are different from (and typically a subset of) those of the user domain. There is also the concept of attribute-based authz (ABAC), which commonly gets thrown into the mix when reasoning about attributes in an authn solution (going to defer that to another post: ABAC, a false sense of ease, flexibility and security).

As a middleware for doing authn, it’s better if we let it do the thing that it does best and not bend it beyond breaking point. That means user exists as a domain that is disconnected from whatever the authn mechanism is. The authn mechanism would facilitate things like managing passwords securely, the lifecycle of passwords, the multi-factor nature of authn, delegation and the other protocols involved. User as a domain, on the other hand, includes things which are relevant to a particular business problem: a user’s reading preferences, education details, food preferences and so on are very specific to the business in consideration. They are best managed by a domain which handles these business requirements, and not clubbed with a concern which manages authn or authz. This also helps the evolution of sub-domains which are very close to a user, e.g. expertise, profile recommendation, skills etc.

Lastly, within the boundary of a business platform with multiple domain services, it’s probably a good idea to look at authn, authz and the user (identity) domain as different concerns. Having said that, separating them for the sake of separation or parallelization doesn’t help, as some services are very close to the evolution of other business domains, e.g. social authoring (blogs, posts etc.), where the concerns around authz cannot be pre-empted and have to evolve along with authz as a concern. The thing about authz is not the ease of use or expression; it is the ease of answering the permission questions, and the “why” of a permission, especially when permissions change dynamically and have multiple change mechanisms.

Principles

- Domain and authorization over authentication and authorization

- User as a domain over user managed by authentication

Business requirements

Story - Part 3

The convenience vs control battle - continues, in a different shape; build vs buy

With all the “advancements” happening around the painful situation with authz, the business was looking to do plenty more which would affect authz. This included functionality around sharing resources with a community, implied access due to a hierarchy that was in play for many reasons, and the notion of a trail of permission changes: who, why, how and when. Even with OpenAM working without any problems, modelling these things would have been (a) not performant and (b) not easy to reason about (why did one get this permission, how and when) from a business standpoint.
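One way to picture a trail of permission changes is an append-only journal from which current access is derived. The following is a minimal sketch under that assumption, not a description of how the platform stored things; `PermissionEvent`, `Journal` and all field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PermissionEvent:
    action: str       # "grant" or "revoke"
    subject: str
    resource: str
    permission: str
    changed_by: str   # who
    reason: str       # why
    via: str          # how, e.g. a hypothetical "share-dialog"
    at: datetime      # when


class Journal:
    def __init__(self):
        self._events = []

    def record(self, action, subject, resource, permission, changed_by, reason, via):
        event = PermissionEvent(action, subject, resource, permission,
                                changed_by, reason, via, datetime.now(timezone.utc))
        self._events.append(event)  # events are only appended, never edited
        return event

    def explain(self, subject, resource, permission):
        """Every change that touched this exact subject/resource/permission, oldest first."""
        key = (subject, resource, permission)
        return [e for e in self._events
                if (e.subject, e.resource, e.permission) == key]

    def is_allowed(self, subject, resource, permission):
        """Current state is whatever the most recent matching event says."""
        history = self.explain(subject, resource, permission)
        return bool(history) and history[-1].action == "grant"


journal = Journal()
journal.record("grant", "rob", "winterfell", "read", "admin", "joined community", "community-join")
print(journal.is_allowed("rob", "winterfell", "read"))
```

Because nothing is overwritten, "why did one get this permission, how and when" becomes a read over the history instead of a guess from the current state.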

Another beast in play was “authorised search”: a fairly involved full-text search capability with filters, facets etc., which had to emit a result iff one was allowed access to it. This is where a bit of nuance comes into play: active and passive authz for search. In the active case, users do not see results they are not supposed to see; only authorized results are shown. In the passive case, authz is enforced not at the search result level but on explicit access. The latter model simplifies things from an authz point of view: search and its results are unaware of any restrictions, and authz is applied lazily on access (which can easily be made a domain concern). This works for a certain class of problems, e.g. a course comes up in the search results but can only be accessed if you’ve registered for it (learning management domain). But it is detrimental in other cases: personal documents, sensitive documents about people etc. in an enterprise collaboration setup don’t fit the passive approach very well. Most of the use cases in our platform called for active authz.
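The difference between the two modes can be sketched in a few lines. This is a toy in-memory illustration, assuming a hypothetical `DOCUMENTS` store with a `readers` set per document; none of these names come from the platform.

```python
# Hypothetical documents with the set of users allowed to read each one.
DOCUMENTS = {
    "course-101": {"title": "Intro to graphs", "readers": {"alice"}},
    "payslip-bob": {"title": "Payslip graphs for Bob", "readers": {"bob"}},
}


class AccessDenied(Exception):
    pass


def can_read(user, doc_id):
    return user in DOCUMENTS[doc_id]["readers"]


def search_active(user, term):
    """Active authz: unauthorized hits never appear in the result list."""
    return [doc_id for doc_id, doc in DOCUMENTS.items()
            if term in doc["title"].lower() and can_read(user, doc_id)]


def search_passive(user, term):
    """Passive authz: search ignores permissions entirely."""
    return [doc_id for doc_id, doc in DOCUMENTS.items()
            if term in doc["title"].lower()]


def open_document(user, doc_id):
    """In the passive model, this is the only place authz is enforced."""
    if not can_read(user, doc_id):
        raise AccessDenied(f"{user} may not read {doc_id}")
    return DOCUMENTS[doc_id]["title"]


print(search_active("alice", "graphs"))
print(search_passive("alice", "graphs"))
```

In the passive variant alice learns that a payslip for Bob exists even though she cannot open it, which is acceptable for a course catalogue and not for sensitive documents.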

With all of this in play, we were at a point where we had to decide whether to use a tool which does authz (like Shiro or OpenAM, but not them; perhaps a paid one?) or to build it from the ground up. In our situation the decision was fairly simple: build it, because we didn’t want to get into this yak shaving again in another two months if we found problems in that other solution. Had we not been in this situation, we probably would’ve moved differently. My recommendation would be to assess what convenience the tool under consideration brings in, and what the long-term value of that convenience is.

Source: http://www.burkard.it/comic-strips/

If there is little value in that, then it’s probably not a bad idea to re-invent that wheel the right way (a circular one, perhaps!).

Architecture and critique!

Active authz brings a bunch of challenges. Especially when the physical storage mechanism differs between the source of truth and search, there is an inherent challenge in applying authz across these mechanisms. The textual query concern and search spanning multiple domains (unified search) with a homogeneous quality of response are some of the reasons for making search a separate concern in itself, a service. There is again a battle between managing concerns well. In a typical federated model, where search and authz are handled by each domain as a first-class concern, which is what we saw in the early phases of the project (Q4-2013), heterogeneity creeps into reasoning about permissions. Also, some of the implementations can get extremely shallow, where it’s hard to reason about them conceptually, beyond the code. That was a good enough reason for us to centralise the interpretation of authz, while the control of definition, change and check/query stayed with the domains. A very similar model was applied to search, hence the centralization. Also, from a product-suite point of view, managing search (and authz) as a separate concern helps manage infrastructure and capacity planning better. More on this particular topic in another post: Federation vs centralization and battle of biggies.

From an architectural standpoint, the ability for a domain to express what permissions meant for it was essential, but at the same time concerns around scale, search etc. had to be diligently managed. One way of managing the search concern was to have something in search on which results could be filtered. It had to be conceptually representative of the authz domain but at the same time play well with the nuances of search (Elasticsearch in our case)[3]. One thing the search solution had to be aware of was that there existed an additional filter it needed to apply (a “must” filter), and that the values for this filter needed to come from the authz solution.
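As a rough illustration of that additional filter, the sketch below builds an Elasticsearch request body in which the text query is combined with a mandatory, non-scoring filter whose values come from authz. It uses the `bool` query with a `filter` clause from the Elasticsearch query DSL; the field names `content` and `acl_read`, and the function name, are assumptions for this example and not the platform's actual mapping.

```python
import json


def authorized_query(text, subject_representations):
    """Build a search body that only matches documents the subject may read.

    `subject_representations` is assumed to be supplied by the authz service.
    """
    return {
        "query": {
            "bool": {
                # What the user actually searched for (scored).
                "must": [{"match": {"content": text}}],
                # The extra clause every hit has to satisfy (not scored):
                # at least one of the user's representations is in the ACL field.
                "filter": [{"terms": {"acl_read": sorted(subject_representations)}}],
            }
        }
    }


body = authorized_query("dragons", {"user:tommen", "group:lannisters"})
print(json.dumps(body, indent=2))
```

The search service only needs to know that the clause exists and where to get its values; what the values mean stays with authz.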

Conceptually what does it mean?

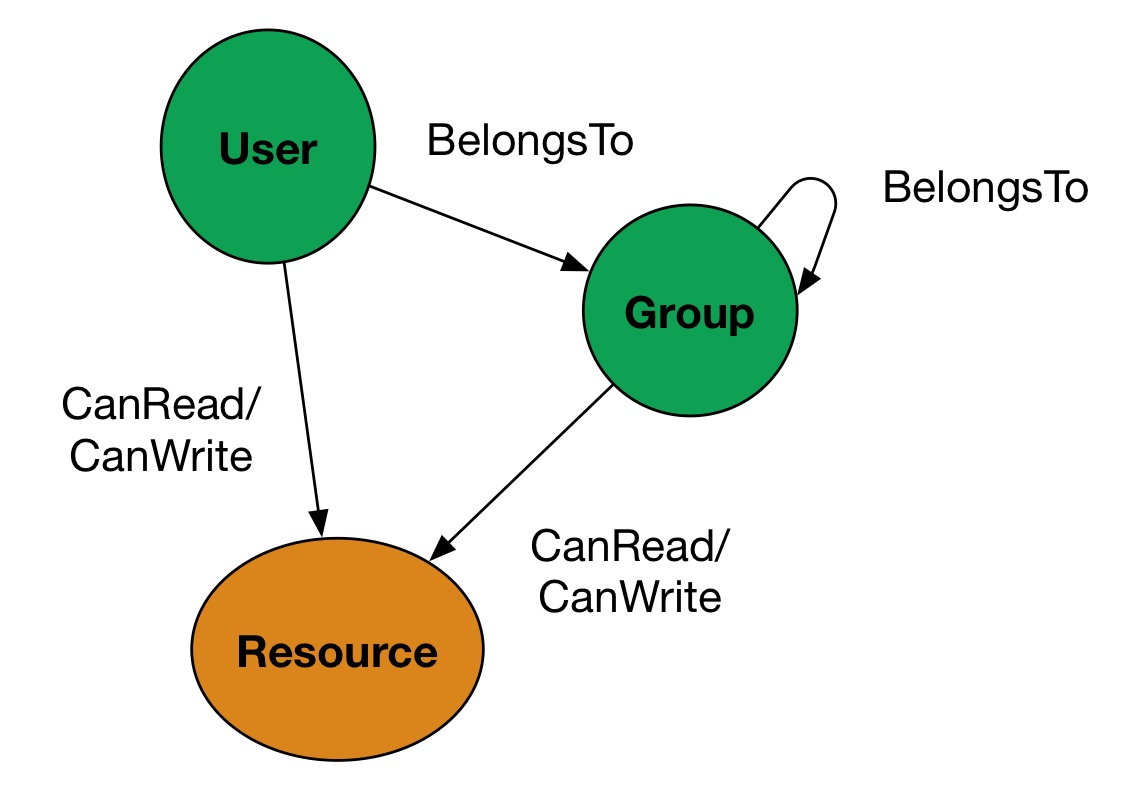

For every query with a user context, a minimal amount of information about the user had to be queried from authz, which could then be matched against all the resources in the “types” and filtered to get authorized results. This essentially involves two things: 1) during writes, for every resource, some level of access control list (ACL), at least for read, needs to be part of search; 2) during query, the querying user should be suitably represented with all their representations, so that they can see a result by virtue of at least one of those representations[4]. More generally, these are subject representations.

Having said that, this sets a bunch of expectations on the authz solution: it must answer the ACL question and the subject representation question. One of the things we observed during performance evaluation was that OpenAM was not so good at answering the subject representation question if a user belonged to too many groups. This will become clearer once I present the solution.
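The write side and the query side described above can be sketched with a toy in-memory index. This is only an illustration of the matching rule, assuming a hypothetical `SearchIndex` class and `user:`/`group:` prefixed identifiers; a real search engine would do the intersection as a filter.

```python
class SearchIndex:
    def __init__(self):
        self._docs = {}

    def index(self, doc_id, text, read_acl):
        # Write side: the read ACL is stored next to the searchable text.
        self._docs[doc_id] = {"text": text.lower(), "acl_read": frozenset(read_acl)}

    def search(self, term, subject_representations):
        # Query side: a hit is visible if at least one representation of the
        # querying user appears in the document's read ACL.
        representations = set(subject_representations)
        return sorted(
            doc_id for doc_id, doc in self._docs.items()
            if term.lower() in doc["text"] and representations & doc["acl_read"]
        )


idx = SearchIndex()
idx.index("gods", "The old gods and the new", {"group:houses-of-westeros"})
idx.index("the-wall", "Night watch at the wall", {"user:john"})

rob = {"user:rob", "group:starks", "group:houses-of-westeros"}
print(idx.search("gods", rob))
print(idx.search("wall", rob))
```

The user's representation set travels with the query, so keeping it small keeps the match cheap, which is the join concern raised in footnote [4].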

The solution

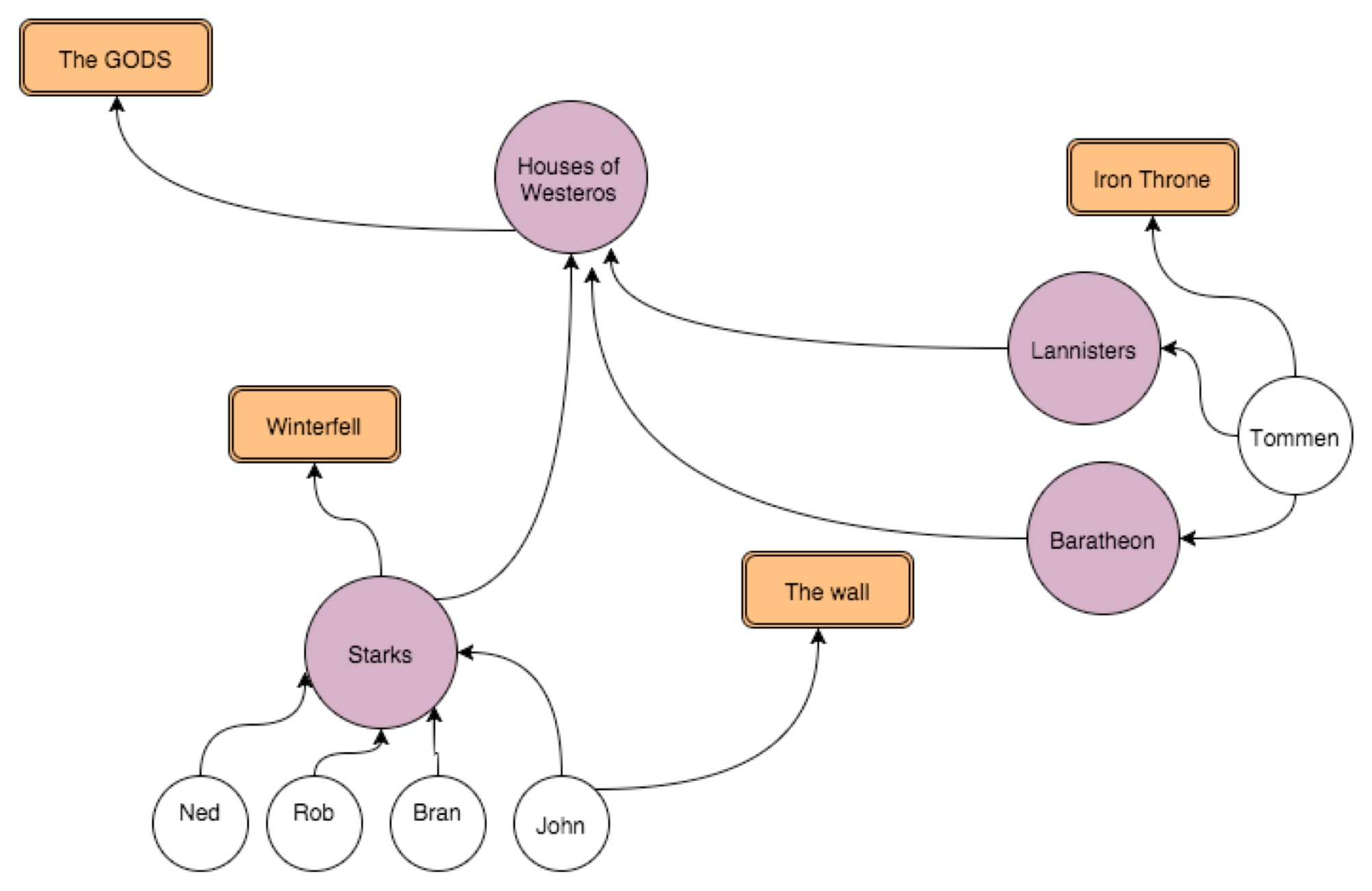

There was a need to model something at a very primitive level which could relate well to all domain needs. The good thing was that we didn’t have to invent anything. OpenAM’s expression was fairly reasonable: everything which needs access control is a resource, and the entities trying to access it are users or groups, collectively subjects. There was also a need to manage the hierarchy of the subject structure. Access is defined by a relationship between subjects and resources. One of the more intuitive ways of representing this relationship was a graph.

- Subjects (users and groups) have access to resources either directly or via the groups they belong to, i.e. group x has access to resourceA, and if I belong to group x then I can access resourceA

- Groups can be part of other groups and form a hierarchy of groups

This isn’t true of OpenAM alone; the same abstraction holds good for Linux file permissions or any other role-based access control (RBAC) mechanism.

Translations/Interpretation of the diagram when modelled in authorization

- Starks rule winterfell - Starks have write access to resource winterfell

- Rob is part of winterfell - Rob can access winterfell because he is a stark

- John commands the wall - John has write access to the wall

- Rob does not command the wall - Rob does not have write access to the wall

- Houses of Westeros have gods - Houses of Westeros have read access to gods

- Starks have gods - Starks have read access to gods

- Tommen has god - Tommen has read access to god

- Starks are part of Houses of Westeros - Starks belong to group House of Westeros

- Lannisters are part of Houses of Westeros - Lannisters belong to group House of Westeros

- Tommen is a Baratheon on his dad’s end - Tommen belongs to Baratheon

- Tommen is a Lannister on his mom’s end - Tommen belongs to Lannisters

- Tommen rightful owner of Iron Throne - Tommen can read/write iron throne
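The relationships in the list above can be sketched as a small in-memory graph: membership edges between subjects, and grant edges from subjects to resources. This is a minimal illustration of the model, not the platform's implementation; the dictionaries, identifiers and function names are assumptions, and only the grants stated explicitly in the list are encoded (the Starks' and Tommen's read access to the gods is derived through group membership).

```python
from collections import deque

# subject -> groups it directly belongs to
MEMBER_OF = {
    "rob": {"starks"},
    "tommen": {"baratheon", "lannisters"},
    "starks": {"houses-of-westeros"},
    "lannisters": {"houses-of-westeros"},
}

# (subject, resource) -> permissions granted directly on that edge
GRANTS = {
    ("starks", "winterfell"): {"write"},
    ("john", "the-wall"): {"write"},
    ("houses-of-westeros", "gods"): {"read"},
    ("tommen", "iron-throne"): {"read", "write"},
}


def subject_representations(subject):
    """The subject itself plus every group reachable via membership edges."""
    seen = {subject}
    queue = deque([subject])
    while queue:
        current = queue.popleft()
        for group in MEMBER_OF.get(current, ()):
            if group not in seen:
                seen.add(group)
                queue.append(group)
    return seen


def can(subject, permission, resource):
    """Access holds if any representation of the subject has the grant."""
    return any(permission in GRANTS.get((rep, resource), ())
               for rep in subject_representations(subject))


print(sorted(subject_representations("tommen")))
print(can("rob", "write", "winterfell"), can("rob", "write", "the-wall"))
```

The same `subject_representations` walk answers the subject representation question for search, and its cost grows with the number of groups a user transitively belongs to.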

Why not graph?

Modelling - conceptual

Software design

Clients

Nuances journalling

Integration

Architecture again

Summary

Appendix or Naive Wisdom

[1] Shiro: We integrated one of the domains and ran a bunch of tests to arrive at those conclusions. Looking back, I would read it as a longer (2-3 week) spike/POC exercise.

[2] My memory is getting flaky as to whether we patched it or compiled it differently. I remember a couple of conversations with the OpenAM team and then we got some bits of the API working.

[3] Being one of the early adopters of Elasticsearch within ThoughtWorks eased a lot of the scale and modelling concerns. Certain features also allowed search to evolve incrementally as a functionality. @skatta has written about some of the work that he did in 2012 here, and a lot of the learnings from there carried over to this product-suite team.

[4] Database people reading this will be banging their heads over why I am describing a JOIN in such terms. Another thing about being in a micro-service setup is that you delay the join to a layer beyond the database, and hence careful evaluation must go into seeing the effects of that join. In our case, the challenge was to keep the smaller half of the table/document space/set in the query, so that the join is cheaper and can be managed effectively without fetching results from the store. More on this in another post: Microservice, understand data mechanics before venturing in